AI expertise for consumer insights

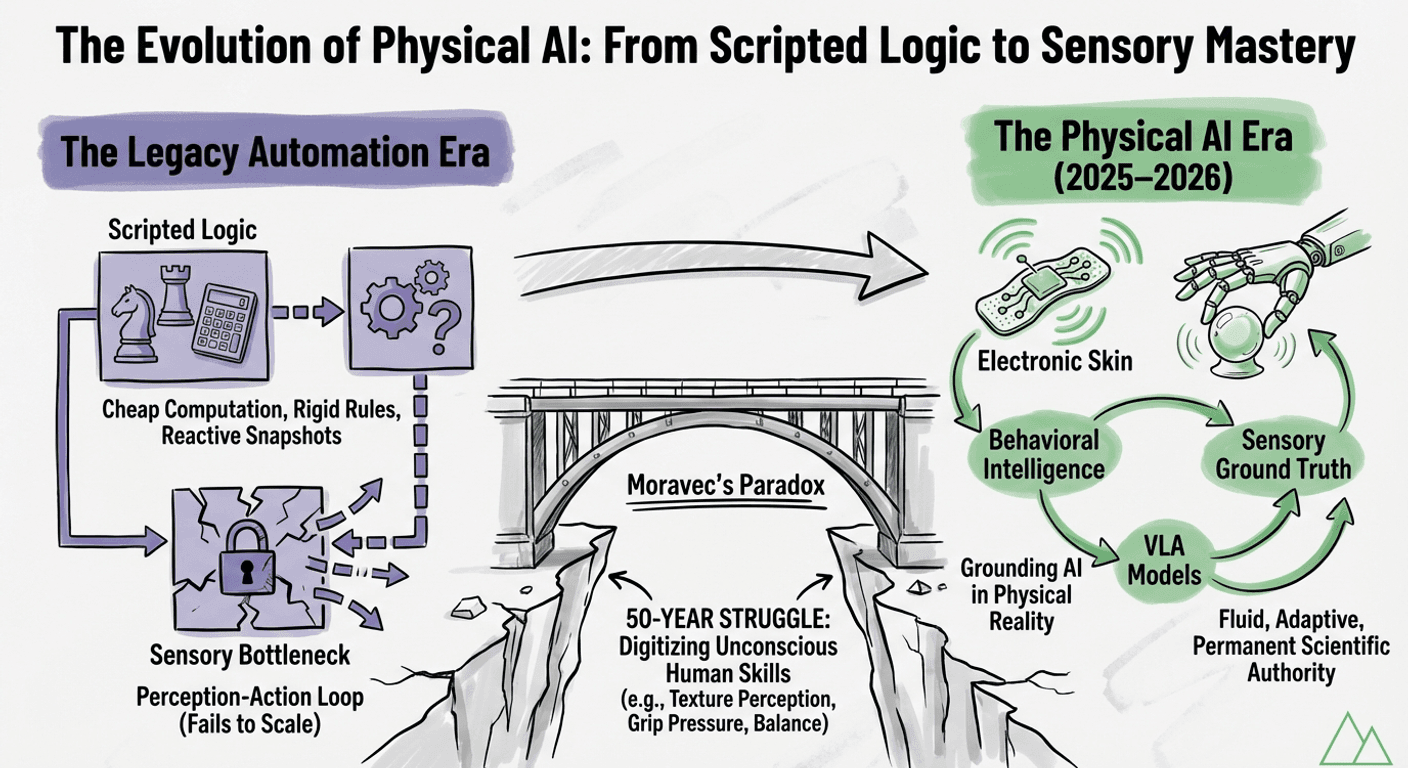

The transition between 2025 and 2026 represents a definitive inflection point in the history of artificial intelligence. For decades, the industry has operated under the shadow of Moravec’s Paradox: the observation that high-level cognitive tasks are computationally inexpensive, while low-level sensorimotor skills, the foundational movements of a human child, are extraordinarily difficult to model. This gap has long served as the primary bottleneck for sensory science and industrial automation.

Today, this boundary is dissolving. Through the convergence of Vision-Language-Action (VLA) models, high-density tactile systems, and vertical mechanical integration, we are witnessing the emergence of "Physical AI": systems capable of deconstructing complex intent into precise, embodied action.

The resolution of the Moravec Gap is not merely a hardware achievement; it is a shift in the methodology of machine learning. Historically, robotics relied on isolated perception-action loops and hard-coded rules. The current paradigm utilizes "Large Behavior Models" that treat physical actions as tokens within a multimodal sequence.
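To make the idea of treating physical actions as tokens concrete, here is a minimal sketch in Python. It assumes one common approach, uniform binning of normalized joint commands into a fixed vocabulary; the bin count, the action range, and the vocabulary offset are illustrative assumptions, not the scheme of any particular model named in this article.

```python
import numpy as np

N_BINS = 256                          # assumed size of the action vocabulary
ACTION_LOW, ACTION_HIGH = -1.0, 1.0   # assumed normalized command range


def actions_to_tokens(action, low=ACTION_LOW, high=ACTION_HIGH, n_bins=N_BINS):
    """Map continuous action values to integer token ids by uniform binning."""
    action = np.clip(np.asarray(action, dtype=float), low, high)
    scaled = (action - low) / (high - low)            # now in [0, 1]
    return np.minimum((scaled * n_bins).astype(int), n_bins - 1)


def tokens_to_actions(tokens, low=ACTION_LOW, high=ACTION_HIGH, n_bins=N_BINS):
    """Recover the bin-center value for each token id."""
    tokens = np.asarray(tokens)
    return low + (tokens + 0.5) * (high - low) / n_bins


def build_sequence(text_token_ids, action, action_vocab_offset):
    """Append action tokens to text tokens so both share one sequence.

    `action_vocab_offset` is a hypothetical offset that keeps action ids
    from colliding with the ids of the text vocabulary.
    """
    action_ids = [action_vocab_offset + int(t) for t in actions_to_tokens(action)]
    return list(text_token_ids) + action_ids
```

The round trip is lossy by at most half a bin width, which is the trade-off this kind of discretization makes in exchange for letting a sequence model predict motion the same way it predicts words.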

By leveraging internet-scale data, encompassing billions of frames of human motion and semantic world knowledge, these systems have moved past the limitations of scripted automation. They now deconstruct a command not as a series of coordinates, but as a sequence of de-risked subtasks. This is the difference between a tool that follows instructions and an infrastructure that understands affordance.

In the context of sensory and consumer science, the closing of the Moravec Gap represents a structural transformation. Historically, sensory research has struggled with the disconnect between qualitative human experience and quantitative data infrastructure. Reports were often one-off snapshots, failing to compound into a living body of knowledge.

The emergence of high-density tactile sensing and Embodied Reasoning allows us to ground AI in the physical reality of human experience. When a system can "feel" the micro-vibrations of a product or the subtle resistance of a texture, it moves from a state of estimation to a state of validation. This provides the scientific rigor required to justify research investment at the executive level while accelerating the transition toward simulation-informed product development.

By treating sensory data as a foundational component of a Vision-Language-Action sequence, organizations can build a global infrastructure where insight is not merely delivered but is built into the workflow. This ensures that methodological rigor is maintained even as systems scale, allowing consumer science teams to lead the strategy rather than simply supporting it.

Google DeepMind’s Gemini Robotics 1.5 (GR 1.5) provides a primary example of this "Thinking VLA" paradigm. Its core innovation lies in its agentic orchestration, utilizing a dual-model framework to bridge the gap between abstract planning and physical execution.

The Embodied Reasoning (ER) component focuses on visual, spatial, and temporal understanding. It deconstructs a command, such as "organize this laboratory sample set by viscosity," into a strategic plan, estimating the weight and material properties of objects before a limb even moves.

Once the strategy is established, the VLA model executes the motion. Crucially, the "Thinking VLA" capability generates an internal sequence of reasoning in natural language during execution. This transparency allows the system to detect its own failures and propose recovery behaviors in real time, transforming a "black box" process into a rigorous, auditable workflow.
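The plan-then-execute loop described above can be sketched in a few lines of Python. This is an illustration of the pattern only, not the Gemini Robotics API: `plan`, `run`, and `StepRecord` are hypothetical names, the planner is a hard-coded stand-in for a reasoning model, and the executor is any callable that reports success or failure.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class StepRecord:
    subtask: str
    thought: str      # natural-language note kept for auditing
    succeeded: bool


def plan(command: str) -> List[str]:
    """Hypothetical stand-in for the reasoning model: ordered subtasks."""
    return [
        f"locate items for: {command}",
        f"grasp and sort for: {command}",
        "verify result",
    ]


def run(command: str, execute: Callable[[str], bool],
        max_retries: int = 1) -> List[StepRecord]:
    """Execute each subtask, logging a thought per attempt and retrying on failure."""
    log: List[StepRecord] = []
    for subtask in plan(command):
        for attempt in range(max_retries + 1):
            thought = f"attempt {attempt + 1}: {subtask}"
            ok = execute(subtask)
            log.append(StepRecord(subtask, thought, ok))
            if ok:
                break
        else:
            # Every attempt failed: record it and stop instead of pressing on.
            log.append(StepRecord(subtask, "retries exhausted; stopping for review", False))
            break
    return log
```

Because every attempt leaves a record, the returned log doubles as the audit trail: a reviewer can see which subtask failed, how many times it was retried, and where the run stopped.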

The mechanical framework for this intelligence has reached a zenith of integration in platforms like the Tesla Optimus Gen 3 and Figure 03.

The Optimus Gen 3 represents a significant leap in mechanical degrees of freedom (DOF). Its 22-DOF hand system, driven by tendons and actuators relocated to the forearm, mimics human muscular anatomy. This provides the dexterity required for the manipulation of delicate sensory components or complex industrial assembly. With joint accuracy of 0.05 degrees and response times of 3 milliseconds, the hardware is now capable of keeping pace with high-speed cognitive reasoning.

Similarly, Figure 03 demonstrates the transition from engineering prototype to mass-manufactured asset. By re-architecting electronics to reduce complexity and incorporating "soft goods" for domestic and industrial safety, Figure has built a platform designed for 10-hour operational shifts. Their Helix intelligence model leverages high-bandwidth tactile data to ensure that manipulation is as reliable in unstructured environments as it is on a controlled factory floor.

The "touch gap" has long been the final barrier to overcoming Moravec’s Paradox. While vision can map a room, it cannot sense the subtle deformation of a plastic container or the micro-vibrations of a tightening seal.

Breakthroughs in tactile infrastructure are now providing the "closing loop" required for high-fidelity manipulation.

The development of neuromorphic robotic electronic skin (NRE-skin) has even introduced local reflex arcs. These systems encode tactile stimuli into pulse trains, allowing robots to react to excessive force or damage with human-like reflexes, a critical safety feature for human-AI collaboration.
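As a rough illustration of encoding tactile stimuli into pulse trains with a local reflex, the Python sketch below rate-codes a pressure trace with a simple integrate-and-fire accumulator and triggers a reflex when pulses arrive too densely. The coding scheme, the function names, and every numeric parameter are assumptions chosen for illustration; they do not describe how NRE-skin is actually built.

```python
import numpy as np


def encode_pulse_train(pressure, max_pressure=10.0, max_rate_hz=200.0, dt=0.001):
    """Rate-code a pressure trace into a 0/1 pulse train.

    Higher pressure produces a higher pulse rate. One sample spans `dt`
    seconds; all default values are illustrative assumptions.
    """
    pressure = np.clip(np.asarray(pressure, dtype=float), 0.0, max_pressure)
    rate = pressure / max_pressure * max_rate_hz   # pulses per second
    pulses = np.zeros(len(pressure), dtype=int)
    acc = 0.0
    for i, r in enumerate(rate):
        acc += r * dt            # integrate the expected pulse count
        if acc >= 1.0:           # fire and keep the remainder
            pulses[i] = 1
            acc -= 1.0
    return pulses


def reflex_triggered(pulses, window=50, threshold=8):
    """Local reflex: True if any sliding window holds at least `threshold` pulses."""
    counts = np.convolve(pulses, np.ones(window, dtype=int), mode="valid")
    return bool(np.any(counts >= threshold))
```

The point of the design is locality: the reflex decision needs only a pulse count over a short window, so it can be taken close to the sensor without waiting on a central planner.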

The convergence of VLA models and advanced hardware shifts the economic conversation. We have moved beyond the question of "will humanoids work" to "how quickly can we build the infrastructure to lead."

In sensory science and research, the impact is profound. Fleet learning ensures that every unit in a network benefits from the collective experience of the whole. When one system learns a more efficient methodology for a task, that skill is immediately available to the global infrastructure.

We are entering an era where sensorimotor skills are no longer a computational bottleneck. By providing robots with the ability to "feel" their surroundings and "think" through their actions, we have moved past the era of the scripted puppet. We are now building autonomous physical agents, the systems that will define the future of research and industry.

Aigora is not interested in the "quick win" of automation. We are focused on the methodology, the rigor, and the transformation of research into a scalable, intelligent infrastructure. Our objective is to empower sensory science teams to translate complex human experience into the permanent technical infrastructure of their organizations.

Aigora is a contributor to the Aigora blog, sharing insights on AI-powered sensory science and product development.