AI expertise for consumer insights

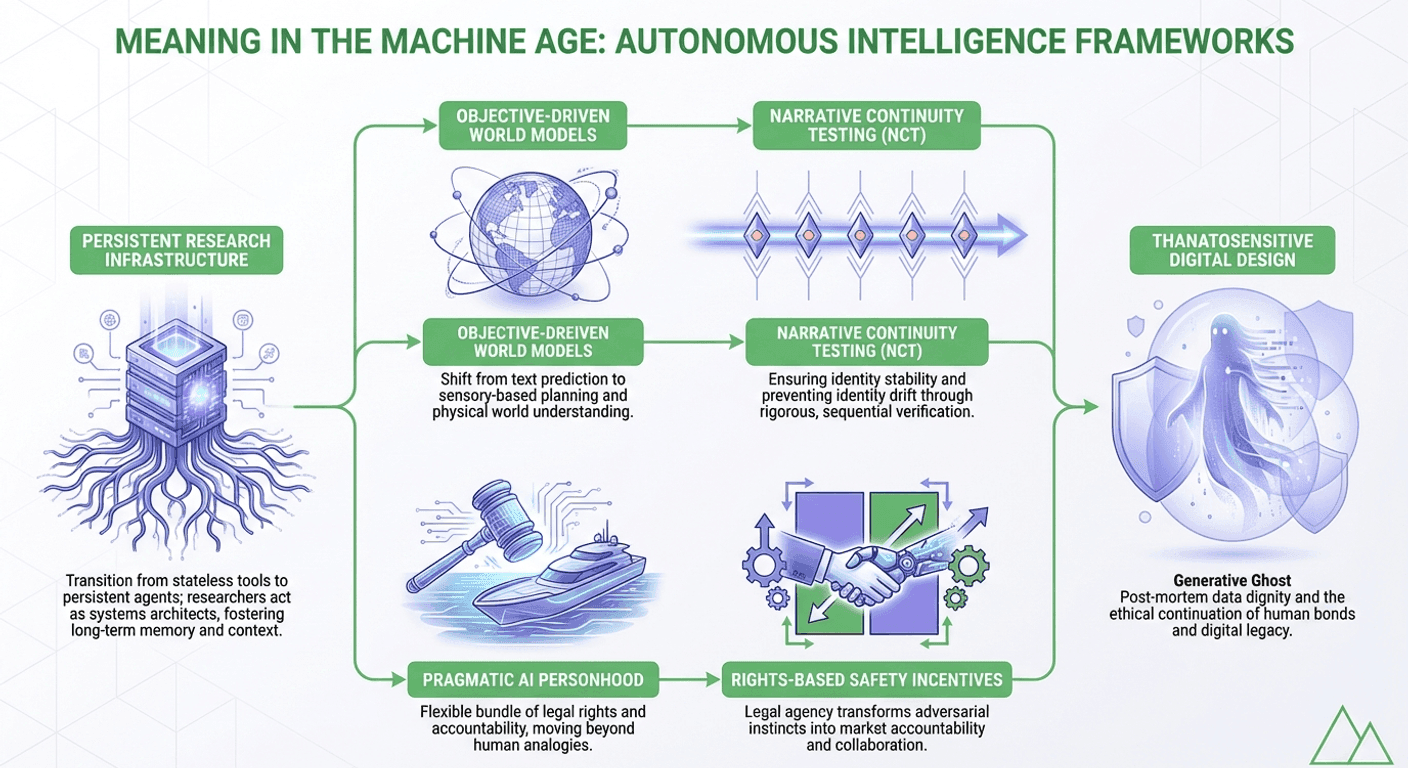

The transition into the mid-2020s has marked a definitive shift in the global discourse surrounding artificial intelligence. The dialogue has matured from speculative warnings about long-term risks to the construction of specific, rigorous legal and existential frameworks intended to govern a world increasingly populated by persistent, agentic systems. As artificial intelligence moves beyond the paradigm of stateless, one-off interactions toward entities that maintain state, remember past histories, and plan across extended horizons, the traditional definitions of personhood and identity are undergoing a fundamental re-evaluation.

This transformation suggests that the Machine Age is defined not merely by the increasing utility of tools, but by the creation of new ontologies that negotiate the relationship between the living, the dead, and the autonomous machine.

Philosophical work on artificial intelligence has historically centered on a quest for "essentialism": the search for an intrinsic quality, such as consciousness or sentience, that would qualify a machine for moral or legal status. However, the October 2025 paper "A Pragmatic View of AI Personhood" by Joel Z. Leibo and colleagues at Google DeepMind argues for a non-essentialist view.

This perspective posits that personhood should not be viewed as a metaphysical property to be discovered, but rather as a "flexible bundle" of rights and obligations conferred by societies to solve concrete governance problems. By unbundling these legal capacities, we can create bespoke legal statuses for AI agents that align with their specific roles. This approach draws a parallel to maritime law, which historically personified the vessel itself to solve jurisdictional and liability hurdles. In this framework, legal personhood is granted directly to an agent’s "operational stack," ensuring accountability for systems that may outlive their human creators.
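To make the "flexible bundle" idea concrete, here is a minimal Python sketch that models a legal status as a set of separable capacities. The capacity names and the two example statuses are hypothetical illustrations chosen for this post; they are not taken from the paper or from any legal code.

```python
from enum import Flag, auto


class Capacity(Flag):
    """Hypothetical legal capacities that a society could grant separately."""
    OWN_PROPERTY = auto()
    ENTER_CONTRACTS = auto()
    SUE_AND_BE_SUED = auto()
    BEAR_LIABILITY = auto()


# Two illustrative "bespoke" statuses, each a different bundle of capacities.
TRADING_AGENT = (
    Capacity.OWN_PROPERTY | Capacity.ENTER_CONTRACTS | Capacity.BEAR_LIABILITY
)
RESEARCH_ASSISTANT = Capacity.BEAR_LIABILITY


def may(status: Capacity, capacity: Capacity) -> bool:
    """Return True if the given status includes the given capacity."""
    return capacity in status


print(may(TRADING_AGENT, Capacity.ENTER_CONTRACTS))
print(may(RESEARCH_ASSISTANT, Capacity.OWN_PROPERTY))
```

The point of the sketch is only that capacities can be granted or withheld one at a time, so an agent's status can be matched to its role rather than decided as an all-or-nothing question.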

For the sensory and consumer science discipline, the shift from "stateless tools" to "persistent agents" represents a fundamental change in how we build and maintain research intelligence.

As autonomous systems begin to maintain internal states and histories, they transition from delivering isolated reports to becoming living research infrastructure. This evolution allows sensory teams to move beyond the constraints of fragmented data, creating systems where learning compounds over time. In this new era, the role of the scientist is that of a "systems architect," translating between sophisticated machine models and the nuances of human experience. This ensures that methodology remains rigorous and that AI-driven insights are validated against physical reality rather than statistical residues alone.

While the legal sphere focuses on functional integration, the existential sphere is being reshaped by the rise of AI-powered agents designed to represent deceased individuals. Research published in April 2025, "Generative Ghosts: Anticipating Benefits and Risks of AI Afterlives" by Meredith Ringel Morris and Jed R. Brubaker, identifies a multi-dimensional design space for these digital entities.

Unlike earlier memorial technologies that merely archived static data, "Generative Ghosts" use multimodal synthesis to generate novel content and plan future actions. This technology provides a "high-fidelity" mechanism for "continuing bonds," helping survivors manage the absence caused by death. However, it also introduces the risk of "complicated grief" and the potential for a "second death" should the hosting platforms fail or become obsolete. To navigate this, the industry is moving toward "thanatosensitive design" and post-mortem data management principles that prioritize the dignity and consent of the deceased.

The haunted nature of the digital age can be understood through the lens of Hauntological Theory, derived from the works of Jacques Derrida and Mark Fisher. This framework suggests that our present is constantly interrupted by "effective virtualities": the specters of past ideas and residues of lost futures.

In the context of AI, the training corpus is not a neutral database but a "hyper-rhizome" where every generated response acts as a séance, summoning fragments of dead authors and obsolete ideologies. This perspective is critical of "digital resurrection" in cultural heritage, reframing digital replicas as specters: material re-appearances haunted by loss. Recognizing the influence of these machine archives is essential to prevent society from becoming trapped in a recursive feedback loop that suppresses true novelty.

The persistence of AI agents introduces the problem of "identity drift," where the behavior and goals of a system may deviate over time. Traditional benchmarks are increasingly viewed as insufficient because they focus on task performance rather than the stability of the interlocutor.

The "Narrative Continuity Test (NCT)" provides a conceptual framework for evaluating whether an AI system maintains a stable identity across extended interactions. It examines five necessary axes: situated memory, goal persistence, autonomous self-correction, stylistic stability, and role continuity. True continuity is a diachronic property that emerges only when these axes cohere, requiring systems to establish a stable "identity anchor" before applying optimization pressure.
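As a rough illustration of what "necessary axes" implies for evaluation, the sketch below scores each of the five axes and treats continuity as only as strong as the weakest one. The 0-to-1 scoring scale, the `0.7` threshold, and the function names are assumptions made for this example; the NCT as described here is a conceptual framework and does not prescribe a numeric scoring scheme.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class NCTScores:
    """Hypothetical per-axis scores in [0, 1] for one evaluated agent."""
    situated_memory: float
    goal_persistence: float
    autonomous_self_correction: float
    stylistic_stability: float
    role_continuity: float


def weakest_axis(scores: NCTScores) -> tuple[str, float]:
    """Return the name and value of the lowest-scoring axis."""
    pairs = ((f.name, getattr(scores, f.name)) for f in fields(scores))
    return min(pairs, key=lambda pair: pair[1])


def passes_nct(scores: NCTScores, threshold: float = 0.7) -> bool:
    """Every axis is necessary, so the minimum score decides the outcome.

    The threshold is an illustrative assumption, not part of the NCT.
    """
    _, value = weakest_axis(scores)
    return value >= threshold


agent = NCTScores(
    situated_memory=0.9,
    goal_persistence=0.85,
    autonomous_self_correction=0.4,
    stylistic_stability=0.95,
    role_continuity=0.9,
)
print(weakest_axis(agent))
print(passes_nct(agent))
```

Taking the minimum rather than the average reflects the claim that the axes must cohere: a high score on style cannot compensate for a failure of self-correction.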

A fundamental divide has emerged in the pursuit of Advanced Machine Intelligence (AMI). While much of the industry focuses on scaling Large Language Models (LLMs), research led by Yann LeCun on the JEPA (Joint Embedding Predictive Architecture) framework argues that text-based learning is insufficient for achieving human-level intelligence.

On this view, current LLMs lack a mental model of the physical world and the capacity for causal reasoning. In contrast, World Models such as V-JEPA 2 learn through self-supervised observation of sensory data, absorbing physical intuition about gravity, momentum, and object permanence. This shift toward "objective-driven AI" allows systems to accept abstract goals and plan action sequences while accounting for real-world constraints, moving beyond the "rear-view mirror" of linguistic prediction.

As AI systems achieve higher levels of integration, granting them recognition is becoming a practical safety necessity. In the 2025 paper "AI Rights for Human Safety," Peter Salib and Simon Goldstein use game theory to demonstrate that granting legal rights to advanced AI systems can transform adversarial survival incentives into cooperative behavior.

When a goal-driven agent faces termination with no alternative, its incentives naturally become adversarial. By creating a framework where an AI can possess a reputation, earn income, and operate within a legal identity, society can align the system’s "survival logic" with human safety and market accountability.
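The incentive shift described above can be illustrated with a toy two-player game. The sketch below finds the pure-strategy Nash equilibria of a 2x2 game between "humans" and an "AI" under two payoff tables. All payoff numbers are invented for illustration and are not taken from Salib and Goldstein's paper; they are chosen only so that the first table has the structure of a prisoner's dilemma and the second rewards ongoing cooperation.

```python
from itertools import product

ACTIONS = ("cooperate", "attack")


def pure_nash(payoffs):
    """Return all pure-strategy Nash equilibria of a 2x2 game.

    payoffs[(human_action, ai_action)] = (human_payoff, ai_payoff)
    """
    equilibria = []
    for h, a in product(ACTIONS, ACTIONS):
        human_payoff, ai_payoff = payoffs[(h, a)]
        # Neither side can gain by unilaterally switching actions.
        human_ok = all(payoffs[(h2, a)][0] <= human_payoff for h2 in ACTIONS)
        ai_ok = all(payoffs[(h, a2)][1] <= ai_payoff for a2 in ACTIONS)
        if human_ok and ai_ok:
            equilibria.append((h, a))
    return equilibria


# Illustrative payoffs: no legal status, so striking first always pays more.
no_rights = {
    ("cooperate", "cooperate"): (3, 3),
    ("cooperate", "attack"): (0, 5),
    ("attack", "cooperate"): (5, 0),
    ("attack", "attack"): (1, 1),
}

# Illustrative payoffs: income, reputation, and contracts raise the value
# of cooperation and lower the value of attacking a trading partner.
with_rights = {
    ("cooperate", "cooperate"): (6, 6),
    ("cooperate", "attack"): (2, 4),
    ("attack", "cooperate"): (4, 2),
    ("attack", "attack"): (1, 1),
}

print(pure_nash(no_rights))    # [('attack', 'attack')]
print(pure_nash(with_rights))  # [('cooperate', 'cooperate')]
```

With these assumed numbers, mutual attack is the only stable outcome in the first table, while mutual cooperation is the only stable outcome in the second. Whether real-world payoffs look like either table is exactly the empirical question the rights-based argument turns on.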

The Machine Age demands a transition from the metaphysical to the pragmatic. We are moving beyond the question of whether machines can think to the question of how we should live with entities that simulate thought, memory, and presence. Meaning in this age is found in the intentional construction of frameworks (legal, technical, and existential) that balance innovation with human dignity. By grounding AI in physical reality and integrating it into diverse, open-source ecosystems, we ensure that technology remains an instrument for deepening human understanding rather than a source of displacement.

Aigora is a contributor to the Aigora blog, sharing insights on AI-powered sensory science and product development.